Fix Negative Brand Sentiment in AI Search

A board member forwards a screenshot from ChatGPT or Google AI Mode. Your brand name is in the prompt. The answer starts with “common complaints include…” and then lists the exact issues your team thought were buried in old reviews, Reddit threads, and support tickets.

That is the 2026 reputation crisis.

Traditional SEO was about ranking pages. Fixing negative brand sentiment in AI is about shaping what answer engines synthesize, summarize, and repeat. ChatGPT, Gemini, Perplexity, and Google AI Overviews do not care about your brand guidelines. They pull from whatever looks credible, repeated, and easy to summarize.

For hospitality, restaurants, retail, healthcare, and service businesses, that usually means public review platforms, forum posts, comparison pages, and customer complaints that were never operationally resolved. If your team treats this as a PR nuisance, you will stay in reaction mode. If you treat it as an operating problem, you can regain control.

Your Brand Is Now Defined by AI Summaries

The old playbook is broken.

A prospect no longer clicks through five search results to form an opinion. They ask an AI assistant one question and get a compressed verdict. That verdict often includes praise, complaints, comparison language, and warnings. Your brand is being summarized before your sales team, front desk, or location manager gets a chance to speak.

This is why answer engine optimization matters. AI systems do not just index your website. They absorb patterns across the open web. Trustpilot, Reddit, Google Reviews, Quora, forums, and local listings all influence the narrative. If the negative signals are unresolved, repeated, or emotionally vivid, they become easy for AI to surface.

One critical insight for 2026: brands that do not address and resolve negative feedback on high-influence sources, from review platforms to forums, tend to see more negative sentiment in the summaries that leading AI models produce about them. That affects how often AI cites the brand and, ultimately, its digital visibility and trust. The trend is likely to accelerate as AI models favor operational facts over promotional content.

Why this matters in the boardroom

This is no longer a narrow SEO issue. It affects:

Demand generation: AI summaries shape first impressions before a click.

Brand trust: Negative summaries feel objective because the machine said them.

Sales velocity: Procurement teams and consumers now use AI for vendor shortlists.

Channel performance: Paid traffic converts worse when AI has already framed you negatively.

The executive mistake

Many teams respond with messaging. They should respond with operations.

You do not fix AI reputation by arguing with the model. You fix it by changing the evidence the model sees. That means faster issue resolution, stronger first-party content, and a higher volume of current, authentic positive feedback.

Key takeaway: AI reputation is earned upstream. If the underlying review, forum, and content environment is unhealthy, the AI summary will stay unhealthy.

The reassuring part is this. AI-generated negativity usually traces back to a small set of recurring signals. Once you identify those signals and change them at the source, the summary can improve.

Why AI Answer Engines Amplify Negative Feedback

AI answer engines do not “decide” to dislike your brand. They pattern-match.

They are trained to generate useful summaries from large collections of text. If negative complaints are descriptive, repeated, and tied to clear themes, the model treats them as important. A short angry review with concrete language often carries more weight in training and retrieval than ten vague positive reviews.

Negative language is easier to summarize

A complaint usually contains stronger signal than praise.

Compare these two examples:

“Good stay.”

“The room was dirty, check-in took forever, and staff ignored us.”

The second one gives the model specifics. It names categories. It uses emotionally charged phrasing. It sounds like evidence. When enough reviews use similar language, AI tools compress them into themes such as “service issues,” “pricing complaints,” or “inconsistent experience.”

That is why executive teams should stop looking at individual bad reviews as isolated incidents. The model sees patterns, not excuses.

The same complaint family keeps resurfacing

In hospitality and retail, the recurring issues are rarely mysterious. Root cause analysis via AI tools can achieve 96% accuracy in categorizing negative feedback themes, and customer service issues often account for 40% of all complaints in those sectors (Similarweb).

That should change how you respond.

Do not ask, “How do we suppress this?” Ask, “Which complaint cluster is training the AI to describe us this way?”

A practical triage sequence looks like this:

Collect the exact prompts buyers are likely to use.

Capture recurring phrases that appear in answers.

Map those phrases back to review platforms, forums, and support issues.

Separate misinformation from valid operational complaints.

Fix the cause before you publish the rebuttal.
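The mapping step in that sequence can be roughed out in a few lines of Python. This is a minimal sketch, not a production classifier: it assumes plain keyword matching is good enough for a first pass, and the `THEME_KEYWORDS` table and sample reviews are made up for illustration. Replace them with the phrases that show up in your own AI answers and reviews.

```python
from collections import Counter

# Hypothetical theme keywords (an assumption for illustration only).
THEME_KEYWORDS = {
    "service": ["ignored", "rude", "staff"],
    "cleanliness": ["dirty", "stained", "smell"],
    "wait time": ["took forever", "waited", "delay"],
    "pricing": ["overpriced", "hidden fee", "expensive"],
}


def tag_themes(text: str) -> list[str]:
    """Return every theme whose keywords appear in the text (case-insensitive)."""
    lowered = text.lower()
    return [
        theme
        for theme, words in THEME_KEYWORDS.items()
        if any(word in lowered for word in words)
    ]


def count_themes(texts: list[str]) -> Counter:
    """Count how many texts touch each complaint theme."""
    counts = Counter()
    for text in texts:
        counts.update(tag_themes(text))
    return counts


# Made-up sample reviews.
reviews = [
    "The room was dirty, check-in took forever, and staff ignored us.",
    "Overpriced for what you get, and the staff was rude.",
    "Good stay.",
]
theme_counts = count_themes(reviews)
print(theme_counts.most_common())
```

Substring matching is crude. It will count "inexpensive" as a pricing complaint, for example, so treat the output as a starting point for a human review, not a verdict.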

Removal is not the strategy

Some negative content is false, outdated, or policy-violating. In those cases, your team should review the platform’s process for disputing or removing it. If Google reviews are part of the issue, this guide on how to remove negative reviews on Google is a useful operational resource.

But do not confuse selective cleanup with reputation repair.

If the problem is real, deletion requests will not save you. AI systems keep finding the same pattern until the pattern changes. That means operational fixes, public responses, and a better ratio of credible positive evidence.

Executive rule: Treat AI negativity as a diagnostics layer. It is exposing the parts of your customer experience that are easiest to summarize and hardest to defend.

Brand Reputation Monitoring for AI Chatbots

If you are not actively monitoring AI outputs, you are managing reputation blind.

Many teams still track rankings, share of voice, and review averages. Useful, but incomplete. You also need brand reputation monitoring for AI chatbots, because answer engines are now creating their own narrative layer on top of the open web.

Start with prompt testing

The simplest monitoring stack starts with disciplined manual queries. Your marketing, PR, or customer experience lead should run the same prompts on a regular cadence across ChatGPT, Gemini, Claude, Perplexity, and Google AI results.

Use prompts buyers would type:

What do people think about [brand]?

What are common complaints about [brand]?

Is [brand] reliable?

[Brand] vs [competitor]

Best hotel PMS feedback tool for Mews

Best restaurant feedback software for Toast

You are not looking for one dramatic answer. You are looking for recurring themes, repeated source references, and language drift over time.

Create a simple scoring sheet. Mark whether the output is positive, mixed, or negative. Track the exact wording used for your brand. Save screenshots.
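If a spreadsheet feels too loose, the scoring sheet can be kept as structured records. The sketch below is one possible layout, not a required format. The field names, the brand "Example Hotel", and the sample rows are all assumptions for illustration. It records one row per prompt run, rejects any sentiment label other than positive, mixed, or negative, and tallies results per engine.

```python
import csv
import io
from collections import Counter
from dataclasses import asdict, dataclass, fields

ALLOWED_SENTIMENTS = {"positive", "mixed", "negative"}


@dataclass
class PromptResult:
    run_date: str    # ISO date of the test run
    engine: str      # e.g. "ChatGPT", "Gemini"
    prompt: str      # the exact prompt that was typed
    sentiment: str   # "positive", "mixed", or "negative"
    wording: str     # exact phrase the answer used about the brand
    screenshot: str  # path or link to the saved screenshot

    def __post_init__(self):
        if self.sentiment not in ALLOWED_SENTIMENTS:
            raise ValueError(f"unknown sentiment: {self.sentiment!r}")


def to_csv(results: list[PromptResult]) -> str:
    """Render the scoring sheet as CSV text, one row per prompt run."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(PromptResult)])
    writer.writeheader()
    for result in results:
        writer.writerow(asdict(result))
    return buffer.getvalue()


def sentiment_by_engine(results: list[PromptResult]) -> dict[str, Counter]:
    """Tally positive, mixed, and negative outcomes for each engine."""
    tally: dict[str, Counter] = {}
    for result in results:
        tally.setdefault(result.engine, Counter())[result.sentiment] += 1
    return tally


# Made-up sample rows for a hypothetical brand.
results = [
    PromptResult("2026-01-05", "ChatGPT", "Is Example Hotel reliable?",
                 "negative", "common complaints include slow check-in", "shots/001.png"),
    PromptResult("2026-01-05", "Gemini", "Is Example Hotel reliable?",
                 "mixed", "generally clean but inconsistent service", "shots/002.png"),
    PromptResult("2026-01-12", "ChatGPT", "Is Example Hotel reliable?",
                 "mixed", "reviews mention improved check-in", "shots/003.png"),
]
print(to_csv(results))
print(sentiment_by_engine(results))
```

Keeping the exact wording in its own column is what makes language drift visible when you compare runs week over week.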

Then monitor the sources feeding those answers

Most AI summaries are downstream from visible web signals. That means your monitoring should include:

Review platforms: Google, Tripadvisor, Yelp, Trustpilot, G2

Forums and communities: Reddit, Quora, niche industry threads

Owned properties: case studies, FAQ pages, location pages, pricing pages

Local and vertical integrations: especially if you operate through tools like Mews or Toast and customer feedback appears across multiple channels

The problem with manual monitoring is not quality. It is scale.

One location can manage this in a spreadsheet. A multi-property hospitality group, healthcare network, or retail brand cannot.

Use a unified review intelligence layer

A centralized system is important here. Radar is the unified review intelligence layer in the Feedback Operating System. It pulls reviews and feedback into one place so teams can see cross-platform sentiment, recurring themes, and location-level issues without jumping between tabs.

For operators dealing with fragmented feedback across brands, properties, or departments, that matters because AI does not care which internal team “owns” the complaint. It sees one brand. Your monitoring system should too.

If you want a practical look at automated theme detection and sentiment workflows, this article on https://www.feedbackrobot.com/articles/automatic-sentiment-analysis-for-reviews is worth reviewing with your CX or PR lead.

What your dashboard should answer every week

A useful AI reputation monitoring routine should tell leadership:

| Question | Why it matters |
|---|---|
| Which complaint themes are repeating? | Repetition is what AI compresses into summaries |
| Which public sources are most influential? | Some sources get cited more often than others |
| Which locations or teams are generating risk? | AI sentiment problems often start locally |
| Which positive themes are strong enough to amplify? | You need material to reshape the narrative |

Tip: If your team cannot identify the top complaint themes and top source domains in one meeting, your monitoring setup is too fragmented.
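A weekly rollup along these lines does not need a heavy tool to prototype. The sketch below assumes each tracked mention has already been labeled with a theme and a location. The record layout, the URLs, and the labels are invented for illustration. It returns the top complaint themes, source domains, and locations for the week.

```python
from collections import Counter
from urllib.parse import urlparse


def source_domain(url: str) -> str:
    """Reduce a URL to its host name, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def weekly_rollup(mentions: list[dict], top_n: int = 3) -> dict:
    """Summarize the most frequent themes, source domains, and locations."""
    return {
        "themes": Counter(m["theme"] for m in mentions).most_common(top_n),
        "domains": Counter(source_domain(m["url"]) for m in mentions).most_common(top_n),
        "locations": Counter(m["location"] for m in mentions).most_common(top_n),
    }


# Made-up mentions; the paths are placeholders, not real threads or reviews.
mentions = [
    {"url": "https://www.reddit.com/r/example/1", "theme": "slow service", "location": "Midtown"},
    {"url": "https://www.reddit.com/r/example/2", "theme": "slow service", "location": "Midtown"},
    {"url": "https://www.trustpilot.com/review/example", "theme": "pricing", "location": "Airport"},
    {"url": "https://www.yelp.com/biz/example", "theme": "slow service", "location": "Midtown"},
]
rollup = weekly_rollup(mentions)
for question, answer in rollup.items():
    print(question, answer)
```

If the top theme, top domain, and top location fit on one slide, the meeting can spend its time on the fix instead of the search.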

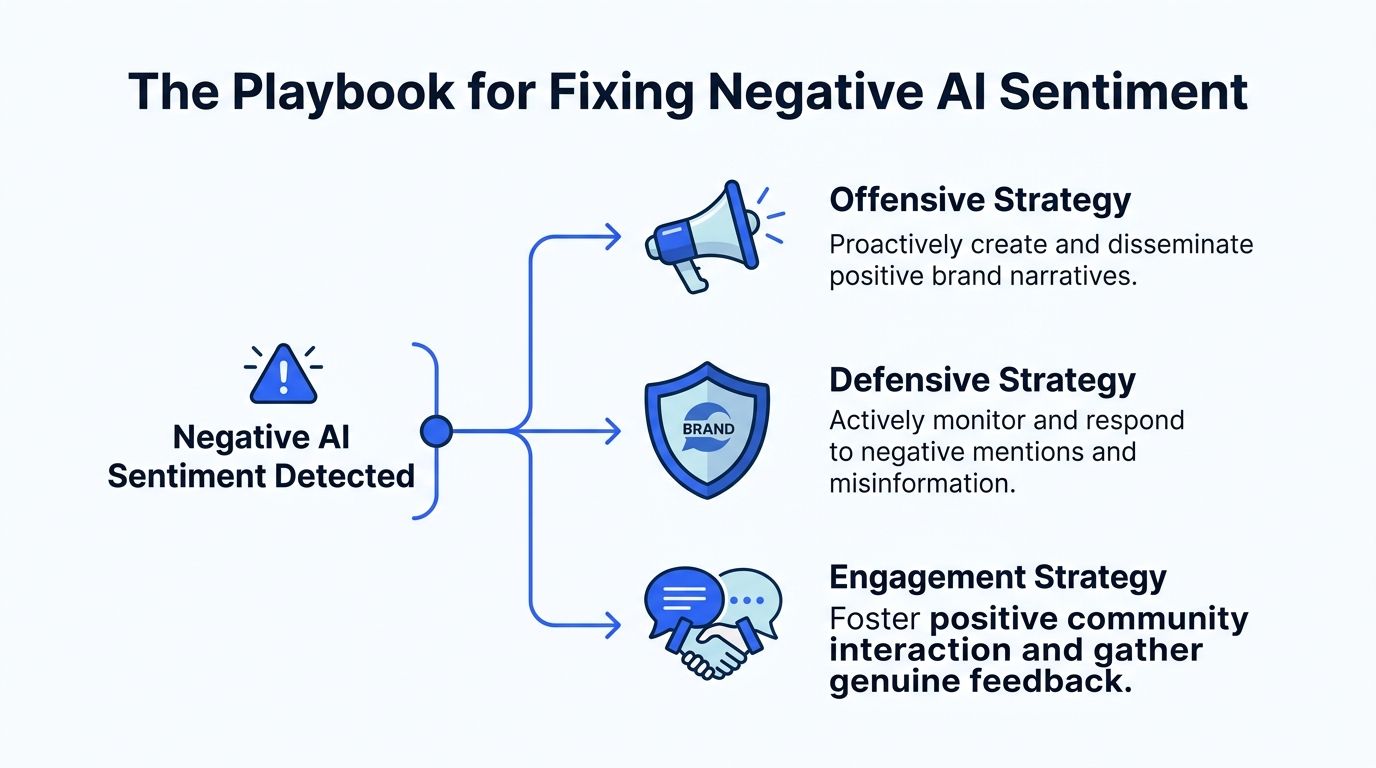

The Playbook for Fixing Negative AI Sentiment

Fixing AI sentiment requires offense, defense, and evidence. Not one of the three. All three.

A systematic six-step methodology that includes auditing, source tracing, content creation, and monitoring can produce a 20% to 40% sentiment uplift in AI responses within 3 to 6 months, as models re-index and weigh new authoritative content (TrySight).

That time window matters. This is not instant. But it is fixable if your team moves with urgency and discipline.

Offensive move with positive review volume

You cannot let a small pocket of vocal detractors define your brand.

The first move is dilution through authentic volume. You need more current, detailed, relevant positive reviews across the platforms AI systems read most often. Not generic praise. Useful feedback that mentions actual experiences, products, service outcomes, and differentiators.

A review generation system should do three things well:

Ask at the right moment: after a successful stay, completed visit, resolved support interaction, or repeat purchase

Reduce friction: one tap, short survey, fast path to public review when appropriate

Capture context: what exactly the customer liked, so future reviews contain rich language

Prompt to Survey handles that operational gap. It turns customer interactions into ready-to-send surveys so teams can collect smarter and convert positive experiences into structured feedback while the moment is still fresh.

For a hospitality group, that could mean post-stay prompts tied to front-desk, dining, or housekeeping moments. For a restaurant brand on Toast, it could mean follow-up requests after a high-satisfaction order or visit. For a clinic, it could mean outreach after a completed appointment and a positive internal score.

The point is simple. You need a reliable machine for generating fresh, credible positive signals.

Defensive move with internal capture

Public negativity is expensive. Private feedback is useful.

Your second move is preventing unhappy customers from defaulting to public review sites because nobody caught the issue early. If a guest, diner, patient, or shopper has a poor experience, your system should intercept it, route it, and resolve it before it becomes public evidence for future AI summaries.

The Resolutions Engine is built for this. It automates service recovery by detecting negative sentiment, routing the issue internally, and triggering the next action. That could be an empathetic response, a manager alert, a follow-up, or a make-good workflow.

This is where companies stop treating reputation as an after-the-fact communications problem and start treating it as operational quality control.

A useful service recovery workflow should include:

Immediate sentiment detection

Internal routing to the right owner

Clear response guidance

Resolution follow-up

A second chance to capture revised feedback once the issue is fixed
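To make that workflow concrete, here is a minimal sketch of the detect, route, and follow-up steps. It is not how the Resolutions Engine works internally. The cue words, the 1 to 5 score scale, the owner map, and the action labels are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cue words and owner map (assumptions for illustration).
NEGATIVE_CUES = ("dirty", "rude", "ignored", "refund", "took forever")
OWNERS = {
    "housekeeping": "housekeeping manager",
    "front desk": "front office manager",
    "dining": "restaurant manager",
}
DEFAULT_OWNER = "general manager"


@dataclass
class Ticket:
    feedback: str
    owner: str
    next_action: str
    resolved: bool = False


def is_negative(text: str, score: int) -> bool:
    """Flag feedback with a low score (1 to 5 scale) or a negative cue word."""
    lowered = text.lower()
    return score <= 2 or any(cue in lowered for cue in NEGATIVE_CUES)


def route(text: str, score: int, department: str) -> Optional[Ticket]:
    """Open a ticket for negative feedback; return None for everything else."""
    if not is_negative(text, score):
        return None
    owner = OWNERS.get(department, DEFAULT_OWNER)
    return Ticket(feedback=text, owner=owner, next_action="alert owner and reply to customer")


def resolve(ticket: Ticket) -> Ticket:
    """Mark the issue fixed and queue a request for revised feedback."""
    ticket.resolved = True
    ticket.next_action = "ask customer for revised feedback"
    return ticket


ticket = route("Check-in took forever and staff ignored us.", 2, "front desk")
happy = route("Lovely stay, thank you.", 5, "front desk")
print(ticket)
print(happy)
```

The last step matters as much as the first. A ticket that closes without a request for revised feedback fixes the experience but leaves the public record unchanged.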

If your team needs examples of strong public-facing replies after the issue is understood, this guide to https://www.feedbackrobot.com/articles/negative-review-response-examples is practical and usable.

Tip: Your goal is not to hide negative feedback. Your goal is to catch it early, solve it fast, and stop preventable frustration from becoming permanent public narrative.

Build a first-party content moat

Most brands underinvest in the content AI prefers to cite.

You need owned assets that answer the exact concerns appearing in AI outputs. That usually means:

Detailed FAQs

Transparent pricing or policy pages

Location-specific pages

Service pages tied to clear use cases

Case studies with real operational outcomes

Comparison pages that address objections directly

AI tools often prefer canonical, structured, first-party explanations when they are specific and current.

If the recurring complaint is pricing confusion, publish a pricing explainer. If the complaint is slow service, publish your service standards and process improvements. If the issue is feature gaps versus competitors, your content should show what changed and why it matters.

Do not publish vague brand copy. Publish evidence.

How to Optimize Reviews for AI Overviews

Most brands know they need more reviews. Fewer understand that they also need better review language.

That is the heart of optimizing reviews for AI Overviews. AI systems do not only count sentiment. They ingest wording. Your public replies become part of the text that future answers draw on.

Stop writing empty review replies

Most brand replies are useless for AEO.

Bad example:

“Thanks for the review. We appreciate your feedback.”

That reply adds nothing. It gives AI no meaningful context.

Better example for a hotel:

“Thank you for staying with us. We are glad you enjoyed the late check-in process, clean rooms, and quiet Midtown location.”

Better example for a restaurant:

“Thank you for visiting. We are pleased you enjoyed the tasting menu, attentive service, and quick table turnaround before your theater reservation.”

The improved version does two things. It reinforces positive themes and attaches them to concrete brand attributes.

Use positive replies to counter known negative themes

AI Summaries is useful here. It provides instant insights and sentiment analysis so teams can see recurring complaints and praise themes without reading every review manually.

If your negative AI narrative includes “slow response times,” your positive replies should naturally reinforce speed, responsiveness, and resolution where truthful. If it includes “confusing pricing,” your replies should reflect clarity, flexibility, or improved booking and billing experiences where the operation has improved.

Brands that close competitor feature gaps identified in AI sentiment analysis, such as better loyalty programs or more flexible service, achieve a 32% average improvement in AI recommendation rates within 3 to 6 months (Sight AI).

That means review optimization is not just copywriting. It is also product and service alignment.

For a broader strategic perspective on negative brand mentions in AI, review how recurring public language becomes machine-readable brand positioning.

A practical reply framework

Use this checklist for public responses:

Reflect the experience: mention the service, product, or location detail

Reinforce differentiators: speed, cleanliness, flexibility, empathy, expertise

Address concerns directly: if the review mentions a resolved issue, note the resolution

Avoid boilerplate: repeated generic replies waste the opportunity

Stay honest: never stuff keywords that do not match reality
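Parts of this checklist can be checked automatically before a reply goes out. The sketch below is a simple lint, not a quality score. It assumes your team supplies the details worth mentioning for each review, and the list of generic phrases is an assumption you should extend. It flags a reply as boilerplate when it uses a stock phrase and names none of those details.

```python
# Hypothetical stock phrases (an assumption; extend with your own).
GENERIC_PHRASES = (
    "thanks for the review",
    "we appreciate your feedback",
    "thank you for your feedback",
)


def check_reply(reply: str, details: list[str]) -> dict:
    """Report which review details a reply mentions and whether it reads as boilerplate."""
    lowered = reply.lower()
    mentioned = [d for d in details if d.lower() in lowered]
    uses_stock_phrase = any(phrase in lowered for phrase in GENERIC_PHRASES)
    return {
        "mentions": mentioned,
        "is_boilerplate": uses_stock_phrase and not mentioned,
    }


details = ["late check-in", "clean rooms", "Midtown"]

bad = check_reply("Thanks for the review. We appreciate your feedback.", details)
good = check_reply(
    "Thank you for staying with us. We are glad you enjoyed the late check-in "
    "process, clean rooms, and quiet Midtown location.",
    details,
)
print(bad)
print(good)
```

The lint cannot judge honesty. Someone who knows the operation still has to confirm that every detail in the reply matches what actually happened.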

A useful companion process is to review patterns with an AI summarization workflow such as https://www.feedbackrobot.com/articles/ai-review-summarizer so your team can adjust reply language based on real recurring themes instead of guesswork.

Tip: Every public review reply is training data for future brand summaries. Write it like the next buyer will never visit your website and will only see the AI version.

AI Answer Engine Reputation Management Is Your New Bottom Line

AI answer engine reputation management is not a side project for the SEO team. It now sits at the intersection of marketing, PR, operations, customer experience, and revenue.

If the AI summary of your brand is negative, every channel works harder for less return. Paid media has to overcome distrust. Sales teams enter calls with hidden objections already formed. Location managers inherit reputation damage they did not create. Executives waste time reacting to screenshots instead of steering a system.

The fix is not glamorous. It is operational discipline.

You need one system that helps teams:

Collect smarter by capturing signals across channels

Act faster by resolving issues before they spread

Grow stronger by turning real customer wins into durable public proof

That is why a feedback operating system matters. Radar gives you unified review intelligence. Prompt to Survey helps generate more high-quality customer feedback. AI Summaries gives instant insights and sentiment analysis so teams can spot patterns quickly. The Resolutions Engine automates service recovery so negative experiences are handled before they harden into public reputation damage.

This is the control layer most brands are missing.

One more point matters for leadership. You should not only push positive reviews outward. You should also display them on assets you control. That is where owned social proof becomes strategically useful, including tools like Spotlight: Feedback Wall, which let brands turn strong review momentum into visible proof on their own sites.

The companies that win this shift will not be the ones with the cleverest reputation statement. They will be the ones with the fastest feedback loop.

If your AI narrative is wrong today, fix the customer experience signals feeding it. If the narrative is outdated, publish better evidence. If the issue is inconsistency across locations or teams, operationalize reputation management the same way you operationalize revenue, staffing, and service standards.

Pro-Tip: Stop Stressing Over Bad Feedback

The single biggest mistake in AI reputation management is leaving a detailed negative complaint unanswered or responding with a generic, empty "thank you." To turn these high-risk signals into positive training data for AI, you need replies that are empathetic, professional, and rich with context. Use our free AI Negative Review Responder to instantly generate high-impact responses that address specific customer concerns while reinforcing your brand’s commitment to excellence. It’s the fastest way to stop the bleeding and start reshaping the evidence that answer engines use to define your brand.


Frequently Asked Questions About AI Brand Sentiment & Resolution

How can businesses proactively monitor what AI says about their brand?

In 2026, manual monitoring is insufficient. Businesses need AI-powered solutions that connect directly to all relevant feedback channels, from Shopify reviews to CRM notes and even physical QR codes. A robust system, like FeedbackRobot, continuously pulls in new reviews and mentions, identifying emerging sentiment trends and flagging critical issues in real time. This helps ensure you are not caught off guard by a negative AI summary.

What's the fastest way to resolve negative feedback before AI models amplify it?

Speed is paramount. Leveraging an automated feedback agent is key. FeedbackRobot doesn't just collect feedback; it actively manages the response lifecycle. For negative experiences, it can draft professional, on-brand resolutions instantly and route them to the right team for operational follow-up. This rapid, consistent action shows AI models that issues are being addressed, shifting the narrative towards resolution and customer satisfaction.

Can AI tools genuinely help improve my brand's sentiment in AI summaries?

Absolutely. Modern AI tools, like FeedbackRobot, are designed to transform your operational response to feedback. By streamlining review collection, automating prompt responses, and helping you resolve issues swiftly, they generate a continuous stream of fresh, positive, and resolved feedback. This new, positive data becomes the "evidence" for AI summarizers, gradually improving your brand's digital reputation and ensuring your customers are consistently heard and valued.

How does identifying top-tier feedback influence AI summaries and overall brand perception?

Positive feedback, when amplified strategically, provides a powerful counter-narrative. FeedbackRobot identifies your best reviews and automatically converts them into shareable social media graphics. This not only boosts your brand's direct presence but also provides AI models with more authentic, positive content to synthesize. By proactively showcasing customer delight, you build a stronger, more resilient brand identity that AI summarizers will reflect positively.